At the corporate level of design, small changes have a significant impact. For that reason, every change is tested, analyzed, researched, and tested again, because even a simple change can swing millions of dollars in revenue. Nicole Maynard, head of UX for Hyatt, says:

“One small change can take months before we place it on the site because we need to understand what kind of effect this change has on every user.”

While Hyatt.com tends to focus on conversion rates, Facebook focuses on interaction. With over 1.94 billion monthly active users as of March 2017 (Source: Facebook 5/3/17), Facebook has become more than just an application. It is a system that has created cultural shifts in our society through one piece of functionality: a common place to share content.

The Share Button

The “Share” button was created to provide a better user experience: to help people easily redistribute content they found engaging.

At its most basic level, this is a perfectly reasonable progression for any application.

Cause: You notice a lot of people are posting the same article.

Effect: You create a way that allows people to easily share another person’s piece of content with their own group of friends.

For a social behemoth like Facebook, changes at this depth are among the first of their kind. In the future, Facebook will be viewed as the dinosaur of its day, trudging its way through the initial hurdles of design and functionality at a seemingly enormous scale.

As we shift from the “digital world” to the “digitally assisted world,” it’s important to understand the social impact application design can have. In this case, that impact is the Share button’s ability to quickly spread incorrect, hateful, or misleading content among a large number of people.

Massive Misinterpretations

HBO’s “Last Week Tonight with John Oliver” ran a segment on the negative effects of poorly conducted scientific studies: when findings aren’t fact-checked, or are misinterpreted, they can set off a chain of incorrect information.

That incorrect information can fool an entire nation, in part because of social proof, a phenomenon described in Dr. Robert Cialdini’s book, Influence: The Psychology of Persuasion. In it, he says:

When people are uncertain about a course of action, they tend to look to those around them to guide their decisions and actions. They especially want to know what everyone else is doing — especially their peers.

In other words, we look to others for cues on how to act. It’s a monkey-see, monkey-do situation. The problem is that this type of social justification can be extremely dangerous.

In one example, Dr. Cialdini discusses the 1978 mass suicide in Jonestown, Guyana. By controlling the group’s social dynamics, its leaders were able to control people’s actions. Members readily accepted instructions and explanations from their leaders, as long as those appeared to be the consensus within the group.

At its core, this is about the misrepresentation of information, something the “Share” button encourages. The feature makes it easy to promote false content, sometimes unknowingly.

Facebook Made It Easy

With over 4.75 billion pieces of content shared daily (Source: Facebook, as of May 2013), people around the world are presented with new ideas from family and friends. When Facebook updated its external link integration, links from any site could appear in the same polished format, and non-credible sites suddenly began to look credible.

People began to post articles from sites they had never heard of. They would share a link from a friend’s page because the headline sounded like something they would agree with. Hell, we even read blogs on Medium.com and post them because we agree with something the author is saying.

Despite the age-old discussion of fact-checking, we keep falling into the same trap of information consumption. Articles posted to Facebook are rarely checked for the publishing organization’s credibility, the author’s background, or any other details the full narrative may require.

These unchecked sources can now appear as “truth” to active users, as well as to the five new profiles being created every second.

Intentional Habit Design

While Facebook has taken measures to fight fake news, the ease with which it lets us interact has turned sharing into an automatic behavior. This is not an accident; it is by design.

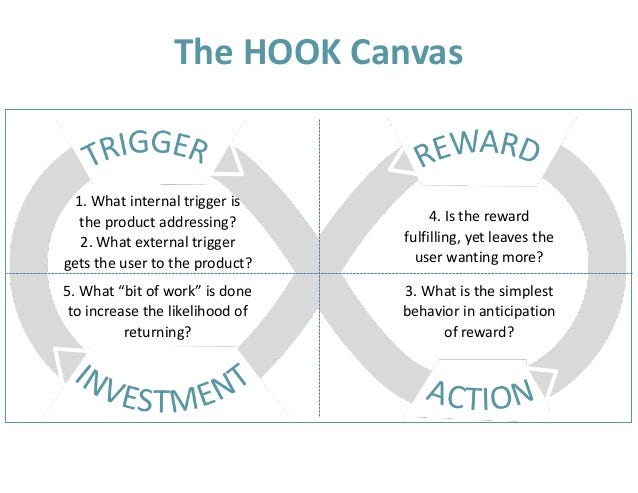

Author and researcher Nir Eyal presents the components that create a psychological “hook” in his book Hooked: How to Build Habit-Forming Products.

In its simplest form, a hook can be thought of as:

An experience designed to influence a user to interact with your platform often enough to form a habit.

This type of functionality sits at the core of any addiction-dependent company. And with an 18 percent year-over-year increase to maintain (Source: Facebook 5/3/17), make no mistake: Facebook is an addiction-dependent company. The more time you spend using it, the more money it makes. So why wouldn’t it create a system designed to keep you there?

The Dangers of Designing for Simplicity

Facebook has unintentionally allowed the world to recycle a stream of irresponsible yet influential articles. It has done so with an amazing team of developers who have done a fantastic job of making it easy to post great-looking links to articles.

With that, hats off! Good job, guys!

When we look at how people use Facebook to interact within their own spheres of influence, some patterns start to emerge. To post an article to Facebook, you do not:

- Have to read the full article

- Have to worry if it’s true

- Have to fully agree with it

You just have to hit “Share.” That one simple action helps push the information closer to being perceived as truth through social proof.

If we removed the ability to post links on Facebook:

- People would need to manually recap an article to share an idea

- False articles and misleading information would decrease in circulation

- Clickbait articles, and the sites that thrive on black-hat advertising techniques, would lose advertising revenue

Socially Responsible Design

The issue is larger than Facebook. While I understand that asking Facebook to remove the “Share” button is unrealistic, I hope this article helps convey the impact designers can have on the world, on any project.

On average, the Like and Share buttons are viewed across almost 10 million websites daily (Source: Facebook, as of 10/2/2014). While the projects we work on may not operate at that scale, it’s important to understand the effect they do have, even if it’s on just one person.

As a designer, I believe we all have a responsibility to examine the full effect of our actions. Every element we put into the world is something we bring into existence, and what we create has the ability to influence and inspire others.

To build a better future, our thinking cannot be limited to human-computer interaction. We need to look further. We need to understand how our choices affect the person behind the experience, not just the “user.” Thank you for reading.